Short Notes: Transport Layer

Inner workings of the Transport Layer, the 'TCP' of the TCP/IP protocol suite.

Transport Layer

The transport layer provides logical communication between application processes running on different hosts. This is required specifically when these hosts are on different networks. The network layer provides logical communication between hosts, whereas the transport layer provides logical communication between processes.

Transport protocols run on end systems. On the sender’s side, the protocol breaks application layer messages into segments, adds transport layer header information, and passes the segments to the network layer. On the receiver’s side, it reassembles the segments into messages by stripping the transport layer headers, and then passes the messages to the application layer. The hosts use IP addresses and port numbers to direct each segment to the appropriate socket.

There are 2 main transport layer protocols:

- Connectionless transport protocol, in which a socket is identified by a 2-tuple: the destination IP address and the destination port number. IP datagrams with the same destination IP address and port number, but different source IP addresses and/or source port numbers, are directed to the same socket at the destination. UDP is an example of such a protocol.

- Connection-oriented protocol, in which the “connection” between hosts is identified by a 4-tuple: the source IP address, source port number, destination IP address, and destination port number. TCP is an example of such a protocol. A server host may support many simultaneous TCP socket connections, and each one is identified by its own 4-tuple.
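The difference between the two lookups can be sketched with plain dictionaries. This is only an illustration: the function names, the string "socket" labels, and the addresses are made up for the example, and a real kernel also handles listening sockets and wildcard addresses.

```python
from typing import Dict, Optional, Tuple

UdpKey = Tuple[str, int]             # (destination IP, destination port)
TcpKey = Tuple[str, int, str, int]   # (source IP, source port, destination IP, destination port)


def udp_demux(sockets: Dict[UdpKey, str], src_ip: str, src_port: int,
              dst_ip: str, dst_port: int) -> Optional[str]:
    """Connectionless lookup: the source fields are ignored."""
    return sockets.get((dst_ip, dst_port))


def tcp_demux(connections: Dict[TcpKey, str], src_ip: str, src_port: int,
              dst_ip: str, dst_port: int) -> Optional[str]:
    """Connection-oriented lookup: the full 4-tuple selects the socket."""
    return connections.get((src_ip, src_port, dst_ip, dst_port))


udp_sockets = {("203.0.113.1", 53): "dns-socket"}
tcp_connections = {
    ("198.51.100.7", 40000, "203.0.113.1", 80): "conn-A",
    ("198.51.100.8", 40000, "203.0.113.1", 80): "conn-B",
}
```

Two UDP senders with different source addresses land on the same `dns-socket`, while the two TCP clients above reach different connection sockets even though they target the same server port.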

UDP

UDP, or User Datagram Protocol, is a connectionless protocol. In the sockets API it corresponds to the SOCK_DGRAM socket type. It offers a best-effort service: there are no acknowledgements, so delivery is unreliable, and ordering is not guaranteed either. UDP also provides no flow or congestion control, no timing or throughput guarantees, and no security. It does, however, provide integrity verification (via a checksum) of the header and payload. Because its header is only 8 bytes and it has no connection setup or acknowledgements, UDP has less overhead than TCP.

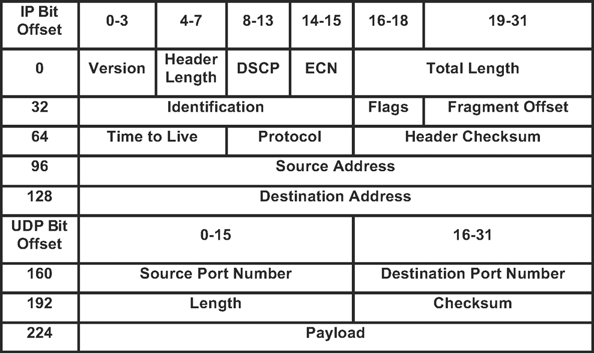

UDP Segment

- UDP port field is only 16 bits long. This means that there are up to 64K possible ports.

- The basic UDP checksum algorithm is the same one used for IP, that is, it adds up a set of 16-bit words using ones’ complement arithmetic and takes the ones’ complement of the result.

- The UDP checksum takes as input the UDP header, the contents of the message body, and something called the pseudoheader. The pseudoheader consists of three fields from the IP header: protocol number, source IP address, and destination IP address plus the UDP length field. (The UDP length field is included twice in the checksum calculation). The motivation behind having the pseudoheader is to verify that this message has been delivered between the correct two endpoints.
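The checksum description above can be tried out with a small sketch for UDP over IPv4. The function names and the example addresses are chosen for illustration only; the pseudoheader layout (source address, destination address, a zero byte, protocol number 17, UDP length) is the standard IPv4 one.

```python
import socket
import struct

UDP_PROTOCOL = 17  # IP protocol number for UDP


def ones_complement_sum(data: bytes) -> int:
    """Add 16-bit big-endian words, folding any carry back into the sum."""
    if len(data) % 2:
        data += b"\x00"  # pad odd-length input with a zero byte
    total = 0
    for (word,) in struct.iter_unpack("!H", data):
        total += word
        total = (total & 0xFFFF) + (total >> 16)  # end-around carry
    return total


def pseudo_header(src_ip: str, dst_ip: str, udp_length: int) -> bytes:
    return (socket.inet_aton(src_ip) + socket.inet_aton(dst_ip)
            + struct.pack("!BBH", 0, UDP_PROTOCOL, udp_length))


def build_udp_segment(src_ip: str, dst_ip: str, src_port: int,
                      dst_port: int, payload: bytes) -> bytes:
    length = 8 + len(payload)  # the UDP header is 8 bytes
    header = struct.pack("!HHHH", src_port, dst_port, length, 0)  # checksum = 0 while summing
    total = ones_complement_sum(pseudo_header(src_ip, dst_ip, length) + header + payload)
    checksum = (~total & 0xFFFF) or 0xFFFF  # 0 means "no checksum", so send 0xFFFF instead
    return struct.pack("!HHHH", src_port, dst_port, length, checksum) + payload


def udp_checksum_ok(src_ip: str, dst_ip: str, segment: bytes) -> bool:
    """A valid segment sums (with its pseudoheader) to all ones."""
    total = ones_complement_sum(pseudo_header(src_ip, dst_ip, len(segment)) + segment)
    return total == 0xFFFF
```

Because the pseudoheader is part of the sum, a segment that is verified against a different destination address than the one it was built for fails the check, which is the misdelivery protection described above.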

Transmission Control Protocol (TCP)

TCP, unlike UDP, is a connection-oriented protocol. It provides a mechanism for reliable delivery and a mechanism for reordering out-of-order packets. TCP also handles congestion control and error checking for segments. It is a full-duplex protocol, meaning that each TCP connection supports a pair of byte streams, one flowing in each direction.

Reliable segment delivery needs a “connection” and a way to “acknowledge” segments. TCP uses a 3-way handshake to set up a reliable connection.

Another important feature that TCP provides is “Flow control”, which involves preventing senders from over-running the capacity of receivers. Similarly, another feature known as “Congestion control” involves preventing too much data from being injected into the network, thereby causing switches or links to become overloaded. Thus, flow control is an end-to-end issue, while congestion control is concerned with how hosts and networks interact.

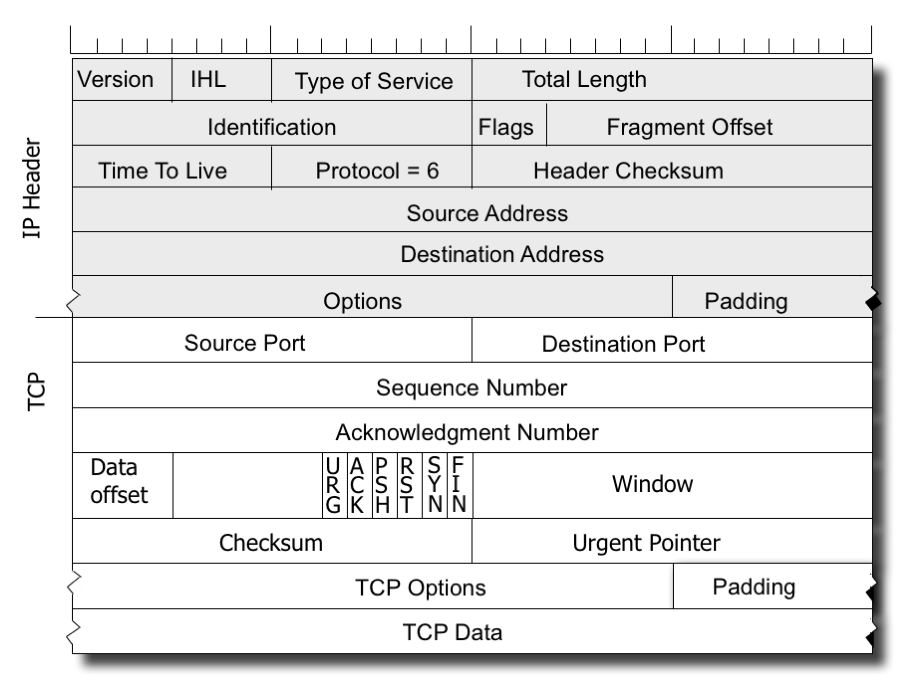

TCP Segment

Following are some of the important fields/flags in the TCP header:

- Synchronization (SYN): Used in the connection establishment phase (the 3-way handshake). Only the first packet from each side should have this flag set. It synchronizes sequence numbers, i.e. it tells the other end which sequence number to expect.

- Acknowledgement (ACK): Used to acknowledge packets that were successfully received by the host. The flag is set if the acknowledgement number field contains a valid acknowledgement number.

- Finish (FIN): Used to request connection termination. It marks the last data sent by the sender, so that both sides can free the reserved resources and terminate the connection gracefully.

- Reset (RST): Used to terminate the connection if the RST sender feels something is wrong with the TCP connection or that the conversation should not exist. It can be sent by the receiver when a packet arrives at a host that was not expecting it.

- Urgent (URG): Used to indicate that the data contained in the packet should be prioritized and handled urgently by the receiver. This flag is used in combination with the Urgent Pointer field to identify the location of the urgent data in the packet.

- Push (PSH): Used to request immediate delivery: the sender transmits the data without waiting to buffer more, and the receiver passes it to the application right away. This flag is typically useful for interactive applications, where a message should not sit in a buffer.

- Window (WND): Used to communicate the size of the receive window to the sender. The sender should limit the amount of data it sends based on the size of the window advertised by the receiver.

- Checksum (CHK): Used to verify the integrity of the TCP segment. It is computed by the sender and verified by the receiver, so it is an end-to-end check; it is not recalculated at each hop along the network path (the IPv4 header checksum is the one that is recomputed hop by hop).

- Sequence Number (SEQ): The byte-stream number of the first data byte in the segment. The receiver uses it to put segments back in order and to detect duplicates.

- Acknowledgement Number (ACK): Used to acknowledge receipt of data and to tell the sender what to send next. The field contains the sequence number of the next byte the receiver expects. This is a cumulative ACK: it acknowledges every byte before that number.
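To see where these fields sit on the wire, here is a minimal sketch that decodes the fixed 20-byte part of a TCP header. The `TcpHeader` type and `parse_tcp_header` name are made up for this example, and TCP options (anything beyond the first 20 bytes) are ignored.

```python
import struct
from typing import FrozenSet, NamedTuple

# Bit positions of the six classic flags in the 16-bit offset/flags word.
FLAG_BITS = {"FIN": 0x01, "SYN": 0x02, "RST": 0x04,
             "PSH": 0x08, "ACK": 0x10, "URG": 0x20}


class TcpHeader(NamedTuple):
    src_port: int
    dst_port: int
    seq: int
    ack: int
    header_len: int          # in bytes
    flags: FrozenSet[str]
    window: int
    checksum: int
    urgent_ptr: int


def parse_tcp_header(raw: bytes) -> TcpHeader:
    if len(raw) < 20:
        raise ValueError("a TCP header is at least 20 bytes")
    # "!" selects network (big-endian) byte order.
    (src, dst, seq, ack, off_flags,
     window, checksum, urgent) = struct.unpack("!HHIIHHHH", raw[:20])
    header_len = (off_flags >> 12) * 4  # data offset is counted in 32-bit words
    flags = frozenset(name for name, bit in FLAG_BITS.items() if off_flags & bit)
    return TcpHeader(src, dst, seq, ack, header_len, flags, window, checksum, urgent)


# A hand-built SYN segment header: data offset 5 words, only the SYN bit set.
syn = struct.pack("!HHIIHHHH", 50000, 80, 1000, 0, (5 << 12) | 0x02, 65535, 0, 0)
header = parse_tcp_header(syn)
```

For the hand-built header above, `header.flags` contains only `SYN`, and `header.header_len` is 20 because there are no options.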

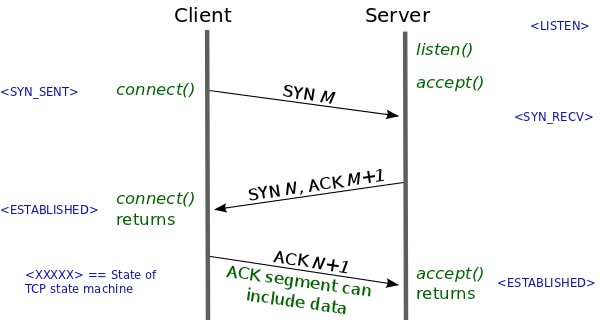

Three way Handshake

The three-way handshake is initiated whenever a client makes a connection request to a server. At the application level, this corresponds to a client performing a connect() system call to establish a connection with a server that has previously bound a socket to a well-known address, called listen(), and then called accept() to receive incoming connections.

During this three-way handshake, the two TCP end-points exchange SYN (synchronize) segments containing options that govern the subsequent TCP conversation, such as the maximum segment size (MSS). The SYN segments also carry the initial sequence numbers (ISNs) that each end-point selects for the conversation (labeled M and N in the figure).

In the (unlikely) event that the initial SYN is duplicated, then the three-way handshake allows the duplication to be detected, so that only a single connection is created.
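The sequence and acknowledgement numbers exchanged during the handshake can be traced with a small model. This is not a network implementation, just the arithmetic: the `Segment` type and function name are illustrative, and the ISNs M and N are passed in as plain integers.

```python
from dataclasses import dataclass
from typing import FrozenSet, List

SEQ_MODULO = 2 ** 32  # sequence numbers are 32-bit and wrap around


@dataclass(frozen=True)
class Segment:
    flags: FrozenSet[str]
    seq: int
    ack: int = 0


def three_way_handshake(client_isn: int, server_isn: int) -> List[Segment]:
    """Return the three segments exchanged for ISNs M (client) and N (server)."""
    # 1. Client -> server: SYN carrying the client's ISN, M.
    syn = Segment(frozenset({"SYN"}), seq=client_isn)
    # 2. Server -> client: SYN-ACK carrying N. A SYN consumes one sequence
    #    number, so the server acknowledges M + 1.
    syn_ack = Segment(frozenset({"SYN", "ACK"}), seq=server_isn,
                      ack=(syn.seq + 1) % SEQ_MODULO)
    # 3. Client -> server: ACK of the server's SYN, i.e. N + 1.
    ack = Segment(frozenset({"ACK"}), seq=syn_ack.ack,
                  ack=(syn_ack.seq + 1) % SEQ_MODULO)
    return [syn, syn_ack, ack]


segments = three_way_handshake(client_isn=100, server_isn=300)
```

With M = 100 and N = 300, the SYN-ACK acknowledges 101 and the final ACK acknowledges 301.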

TCP Fast Open (TFO)

The aim of TFO is to eliminate one round trip time from a TCP conversation by allowing data to be included as part of the SYN segment that initiates the connection. Theoretically, the initial SYN segment could contain data sent by the initiator of the connection: RFC 793, the specification for TCP, does permit data to be included in a SYN segment. However, TCP is prohibited from delivering that data to the application until the three-way handshake completes. This is a necessary security measure to prevent various kinds of malicious attacks. For example, if a malicious client sent a SYN segment containing data and a spoofed source address, and the server TCP passed that segment to the server application before completion of the three-way handshake, then the segment would both cause resources to be consumed on the server and cause (possibly multiple) responses to be sent to the victim host whose address was spoofed.

TFO is designed to do this in such a way that the security concerns described above are addressed:

- Servers employing TFO must be idempotent. They must tolerate the possibility of receiving duplicate initial SYN segments containing the same data and produce the same result regardless of whether one or multiple such SYN segments arrive.

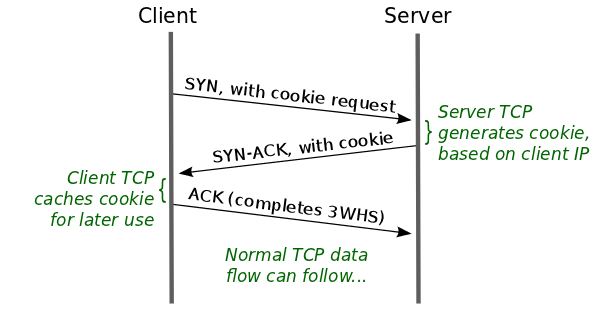

- In order to prevent the aforementioned malicious attacks, TFO employs security cookies (TFO cookies). The TFO cookie is generated once by the server TCP and returned to the client TCP for later reuse. The cookie is constructed by encrypting the client IP address in a fashion that is reproducible (by the server TCP) but is difficult for an attacker to guess. Request, generation, and exchange of the TFO cookie happens entirely transparently to the application layer.

- The client requests a TFO cookie by sending a SYN segment to the server that includes a special TCP option asking for a TFO cookie.

- In response, the server generates a TFO cookie that is returned in the SYN-ACK segment that the server sends to the client. The client caches the TFO cookie for later use.

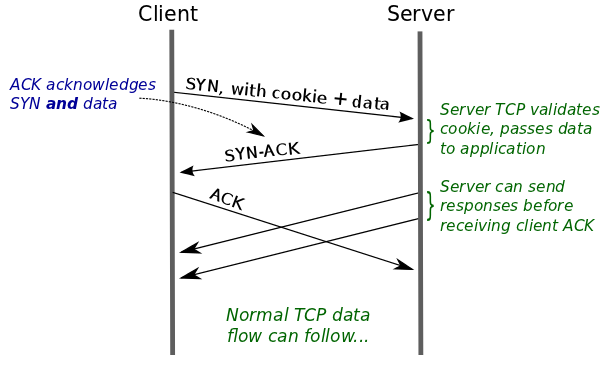

For subsequent conversations with the server, the client can short circuit the three-way handshake, as shown in figure below:

- The client TCP sends a SYN that contains both the TFO cookie (specified as a TCP option) and data from the client application.

- The server TCP validates the TFO cookie by duplicating the encryption process based on the source IP address of the new SYN. If the cookie proves to be valid, then the server TCP can be confident that this SYN comes from the address it claims to come from. This means that the server TCP can immediately pass the application data to the server application. If the TFO cookie proves not to be valid, then the server TCP discards the data and sends a segment to the client TCP that acknowledges just the SYN.

- From here on, the TCP conversation proceeds as normal: the server TCP sends a SYN-ACK segment to the client, which the client TCP then acknowledges, thus completing the three-way handshake. The server TCP can also send response data segments to the client TCP before it receives the client’s ACK.
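The cookie logic in the steps above can be sketched as follows. Note the substitution: the text describes encrypting the client IP address, whereas this sketch uses a keyed hash (HMAC-SHA256) truncated to 8 bytes as a stand-in, which has the same "reproducible by the server, hard to guess for others" property. The class name, the action strings, and the cookie length are assumptions made for the example.

```python
import hashlib
import hmac
import ipaddress
import os
from typing import Optional, Tuple

COOKIE_LEN = 8  # bytes; an arbitrary choice for this sketch


class TfoServer:
    """Hypothetical model of the server-side TFO cookie decisions."""

    def __init__(self, secret: Optional[bytes] = None) -> None:
        self._secret = secret if secret is not None else os.urandom(16)

    def make_cookie(self, client_ip: str) -> bytes:
        packed_ip = ipaddress.ip_address(client_ip).packed
        digest = hmac.new(self._secret, packed_ip, hashlib.sha256).digest()
        return digest[:COOKIE_LEN]

    def handle_syn(self, client_ip: str, cookie: Optional[bytes],
                   data: bytes) -> Tuple[str, bytes]:
        if cookie is None:
            # Cookie request: hand out a cookie in the SYN-ACK.
            return "syn-ack+cookie", self.make_cookie(client_ip)
        if hmac.compare_digest(cookie, self.make_cookie(client_ip)):
            # Valid cookie: the data may go to the application immediately.
            return "deliver-data", data
        # Invalid cookie: drop the data and acknowledge only the SYN.
        return "ack-syn-only", b""
```

A first call with `cookie=None` models the cookie request; later SYNs from the same address that present the cookie get their data delivered, while a SYN with a spoofed source address does not.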

TCP Reliable data transfer

TCP guarantees reliable, in-order delivery of data. To achieve this, it uses a sliding window algorithm, in the same way link-layer protocols do. Where TCP differs from the link-level algorithm is that it folds in the flow-control function as well: rather than having a fixed-size sliding window, the receiver advertises a window size to the sender.

Sliding window algorithm

The sliding window is a variable-sized buffer that represents the available space in the sender’s or the receiver’s end of the connection. The sender can only send data that fits within the window, and the receiver can only accept data that fits within the window.

Stop and Wait:

- The sender sends the packet and waits for the acknowledgement of the packet.

- Once the acknowledgement reaches the sender, it transmits the next packet in sequence.

- If the acknowledgement does not reach the sender before the specified time, known as the timeout, the sender sends the same packet again.

- It is a case of the general sliding window protocol with a transmit window size of 1 and a receive window size of 1.

- This is slow and inefficient: the sender sits idle for a full round trip after every packet.
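A back-of-the-envelope calculation shows how inefficient stop-and-wait can be. The sketch below uses the usual textbook approximation (ignoring ACK transmission time and losses); the function name and the example link parameters are assumptions for illustration.

```python
def link_utilization(packet_bytes: int, link_bps: float, rtt_s: float,
                     window: int = 1) -> float:
    """Fraction of time the sender is actually transmitting.

    window=1 models stop-and-wait; larger values model a pipelined
    sliding window with that many packets in flight.
    """
    transmit_time = packet_bytes * 8 / link_bps  # seconds to push one packet onto the link
    return min(1.0, window * transmit_time / (rtt_s + transmit_time))


# A 1500-byte packet on a 1 Gbit/s link with a 30 ms round-trip time.
stop_and_wait = link_utilization(1500, 1e9, 0.030)
pipelined = link_utilization(1500, 1e9, 0.030, window=1000)
```

Under these assumptions stop-and-wait keeps the link busy for only about 0.04% of the time, which is the motivation for letting the sender keep several packets in flight.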

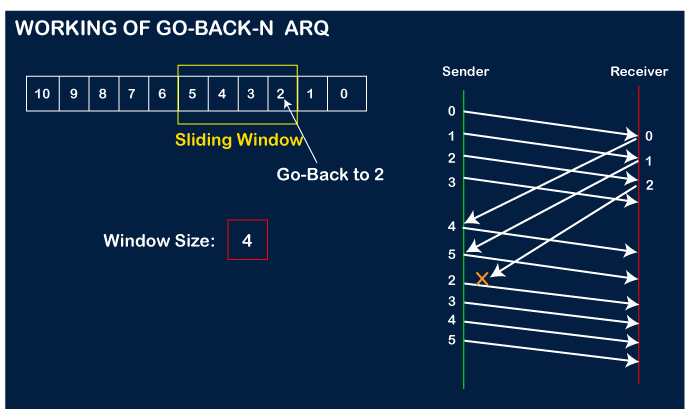

Go-back-N:

- In GBN, the sender can have up to N unacked packets in pipeline.

- It is a case of the general sliding window protocol with the transmit window size of N and receive window size of 1. It can transmit N frames to the peer before requiring an ACK.

- The receiver only sends cumulative ACKs. If there is a gap, i.e. a segment is missing, it discards the out-of-order segments and keeps acknowledging the last segment received in order.

- The sender keeps a timer for the oldest unacked packet.

- When this timer expires, the sender retransmits all the unacked packets, starting from the oldest one.
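The retransmission cost of Go-back-N can be seen in a small round-based simulation. It is a simplification under stated assumptions: the sender transmits its whole window each round, ACKs are never lost, and each packet listed in `lose_once` is dropped on its first transmission only. The function name and parameters are made up for this sketch.

```python
from typing import Iterable, List


def go_back_n(total: int, window: int, lose_once: Iterable[int]) -> List[int]:
    """Return the sequence numbers in the order the sender transmits them."""
    to_lose = set(lose_once)  # packets dropped on their first transmission
    base = 0                  # oldest unacked packet at the sender
    expected = 0              # next in-order packet the receiver will accept
    sent: List[int] = []
    while base < total:
        # Send (or, after a timeout, resend) everything in the window.
        for seq in range(base, min(base + window, total)):
            sent.append(seq)
            if seq in to_lose:
                to_lose.discard(seq)  # lost in the network this time
                continue
            if seq == expected:
                expected += 1         # in order: accepted
            # Otherwise the receiver discards it; its receive window is 1.
        base = expected               # the cumulative ACK slides the window
    return sent


transmissions = go_back_n(total=5, window=3, lose_once=[1])
```

Losing packet 1 forces packet 2 to be sent again even though it arrived intact the first time, giving the order 0, 1, 2, 1, 2, 3, 4.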

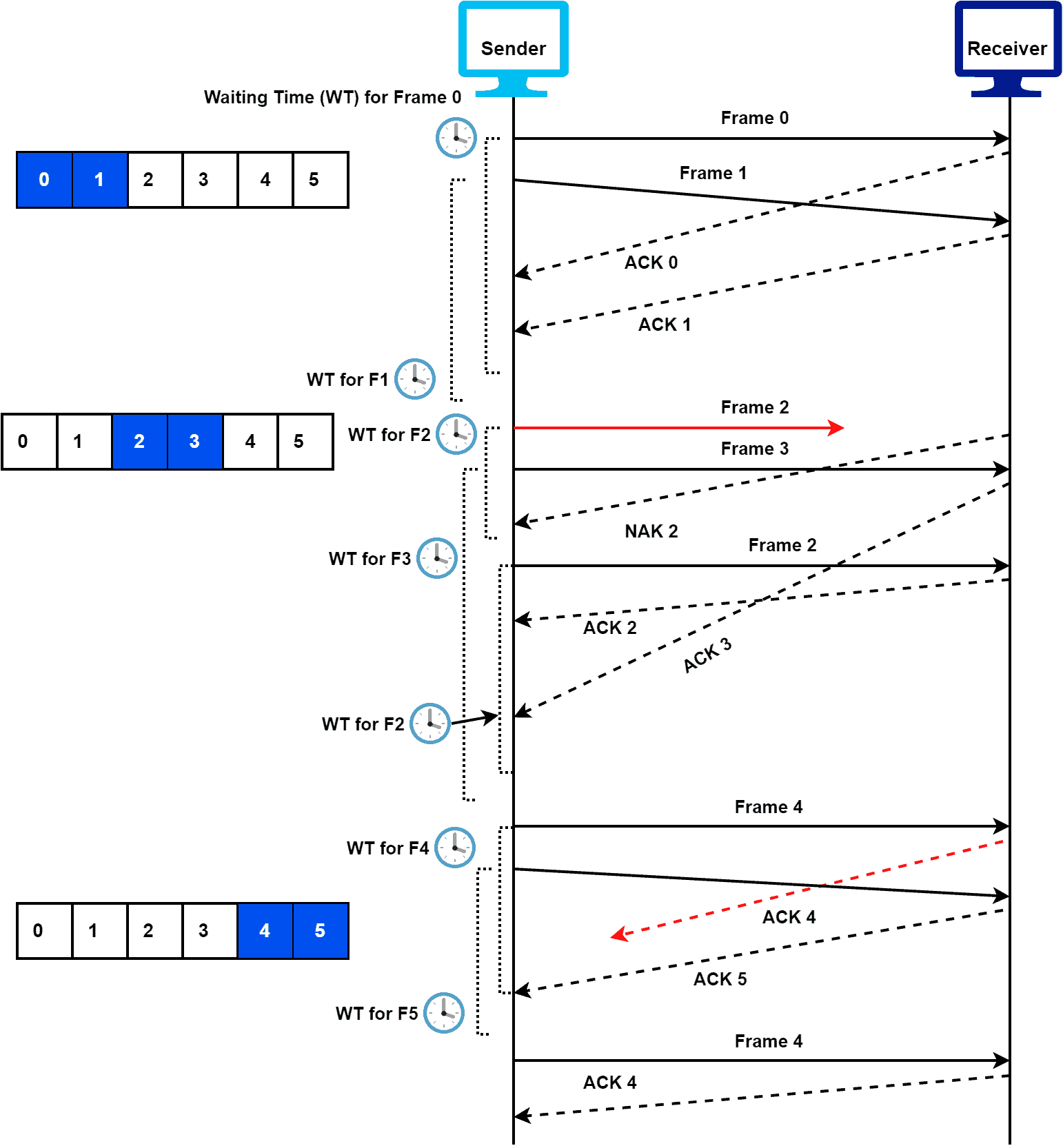

Selective Repeat:

- In Selective Repeat, the sender can have up to N unack’ed packets in the buffer/pipeline.

- It is a case of the general sliding window protocol with the transmit window size of N and receive window size of N as well.

- The receiver sends an individual ACK for each packet.

- The receiver maintains a buffer to hold out-of-order packets and delivers them in order once the gaps are filled.

- The sender maintains a timer for each unacked packet.

- When a timer expires, the sender retransmits only the packet that timer belongs to.
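A round-based simulation illustrates how Selective Repeat avoids resending packets that already arrived. The same simplifying assumptions apply as for any such toy model: the sender works in rounds, ACKs are never lost, and each packet in `lose_once` is dropped on its first transmission only. Names and parameters are illustrative.

```python
from typing import Iterable, List, Set, Tuple


def selective_repeat(total: int, window: int,
                     lose_once: Iterable[int]) -> Tuple[List[int], List[int]]:
    """Return (transmission order at the sender, delivery order at the receiver)."""
    to_lose = set(lose_once)
    acked: Set[int] = set()      # packets individually acknowledged
    buffered: Set[int] = set()   # receiver's out-of-order buffer
    delivered: List[int] = []
    sent: List[int] = []
    base = 0                     # oldest unacked packet at the sender
    while base < total:
        for seq in range(base, min(base + window, total)):
            if seq in acked:
                continue         # already acknowledged: never resent
            sent.append(seq)
            if seq in to_lose:
                to_lose.discard(seq)  # lost; its own timer will fire later
                continue
            buffered.add(seq)
            acked.add(seq)
        # The receiver hands over the longest in-order run it now holds.
        while len(delivered) in buffered:
            nxt = len(delivered)
            buffered.remove(nxt)
            delivered.append(nxt)
        # The sender's window slides past every acknowledged packet.
        while base in acked:
            base += 1
    return sent, delivered


sent_order, delivery_order = selective_repeat(total=5, window=3, lose_once=[1])
```

With packet 1 lost once, only packet 1 is retransmitted (order 0, 1, 2, 1, 3, 4), and the application still receives 0 through 4 in order because packet 2 waited in the receiver's buffer.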

MTU

- MTU, or maximum transmission unit, is the largest PDU (Protocol Data Unit), in bytes, that can be sent in a single packet on a link. The default MTU on Ethernet is 1500 bytes; IPv6 requires every link to support an MTU of at least 1280 bytes.

- The Ethernet specification specifies 1500 bytes for 10M-10G networks. The maximum possible IP packet length is 65,535 bytes (a 16-bit length field); a packet larger than the link MTU is fragmented by IP into several smaller packets.
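A short calculation relates the MTU to TCP's maximum segment size and to IPv4 fragmentation. It assumes 20-byte IPv4 and TCP headers with no options; the function names are invented for this sketch. The maximum IPv4 packet length used below is 65,535 bytes, the largest value a 16-bit length field can hold.

```python
import math

IPV4_HEADER = 20  # bytes, assuming no IP options
TCP_HEADER = 20   # bytes, assuming no TCP options


def tcp_mss(mtu: int) -> int:
    """Largest TCP payload that fits in one unfragmented packet."""
    return mtu - IPV4_HEADER - TCP_HEADER


def ipv4_fragment_count(packet_len: int, mtu: int) -> int:
    """Number of fragments needed to carry an IPv4 packet over a link."""
    payload = packet_len - IPV4_HEADER
    # Fragment offsets are counted in 8-byte units, so every fragment except
    # the last carries a payload that is a multiple of 8 bytes.
    per_fragment = (mtu - IPV4_HEADER) // 8 * 8
    return math.ceil(payload / per_fragment)


mss = tcp_mss(1500)
fragments = ipv4_fragment_count(65535, 1500)
```

On a 1500-byte MTU this gives an MSS of 1460 bytes, and a maximum-size IPv4 packet would be split into 45 fragments.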

Tradeoffs:

- A larger MTU brings greater efficiency because each network packet carries more user data while protocol overheads remain fixed; the resulting higher efficiency means an improvement in bulk protocol throughput.

- A larger MTU also requires processing of fewer packets for the same amount of data. In some systems, per-packet-processing can be a critical performance limitation.

- However, this gain is not without a downside. Large packets occupy a link for more time than a smaller packet, causing greater delays to subsequent packets, and increasing network delay and delay variation.

- Large packets are also problematic in the presence of communications errors. The corruption of a single bit in a packet requires that the entire packet be retransmitted, which can be costly. Their greater payload makes retransmissions of larger packets take longer and wastes network bandwidth.
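Both sides of the tradeoff can be put into numbers. The sketch below assumes 40 bytes of IP plus TCP headers per packet and ignores link-layer framing; the 9000-byte value is a commonly used "jumbo frame" size, included here only as an assumed point of comparison.

```python
def payload_efficiency(mtu: int, header_bytes: int = 40) -> float:
    """Share of each packet that is user data rather than headers."""
    return (mtu - header_bytes) / mtu


def serialization_delay_us(packet_bytes: int, link_bps: float) -> float:
    """Microseconds a packet occupies the link while being transmitted."""
    return packet_bytes * 8 / link_bps * 1e6


standard_eff = payload_efficiency(1500)   # about 97.3% user data
jumbo_eff = payload_efficiency(9000)      # about 99.6% user data
standard_delay = serialization_delay_us(1500, 10e6)  # about 1200 us on 10 Mbit/s
jumbo_delay = serialization_delay_us(9000, 10e6)     # about 7200 us on 10 Mbit/s
```

The larger packet gains roughly two percentage points of efficiency, but on a slow link it holds up every packet queued behind it six times longer.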

Sockets

- Sockets are an IPC mechanism that applications can create.

- Sockets enable communication between applications across a network. Each side creates its own socket, and the two sockets must be connected before communication can begin. Once connected, the applications can use read() and write() to communicate.

- The sending process relies on the transport layer infrastructure on the other side of the connection to deliver the message to the socket at the receiving process.

- Intel/AMD CPUs are little-endian. TCP/IP has standardized on big-endian for all network integer numbers, and there are helper functions (htons(), htonl(), ntohs(), ntohl()) that translate between host and network byte order.
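The following sketch ties the last two points together: two connected sockets exchange a 16-bit number that is written in network (big-endian) byte order. It uses `socket.socketpair()` to get an already-connected pair inside one process, which is a convenience for the demo rather than how a client and server would normally connect; the helper names are made up.

```python
import socket
import struct
from typing import Tuple


def recv_exact(sock: socket.socket, count: int) -> bytes:
    """Read exactly `count` bytes; a single recv() may return fewer."""
    data = b""
    while len(data) < count:
        chunk = sock.recv(count - len(data))
        if not chunk:
            raise ConnectionError("peer closed the connection early")
        data += chunk
    return data


def send_port_number(port: int) -> Tuple[bytes, int]:
    """Send a 16-bit value between two connected sockets in network byte order."""
    left, right = socket.socketpair()
    with left, right:
        wire = struct.pack("!H", port)   # "!" means network (big-endian) order
        left.sendall(wire)
        (decoded,) = struct.unpack("!H", recv_exact(right, 2))
    return wire, decoded


wire_bytes, value = send_port_number(8080)
```

Port 8080 is 0x1F90, so the bytes on the wire are `1f 90` regardless of the host CPU's byte order; `socket.htons()` and `socket.ntohs()` perform the same conversion on plain integers.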

TCP - SYN Flood attack

SYN flood (half-open attack) is a type of denial-of-service attack which aims to make a server unavailable to legitimate traffic by consuming all available server resources. By repeatedly sending initial connection request (SYN) packets, the attacker is able to overwhelm all available ports on a targeted server machine, causing the targeted device to respond to legitimate traffic sluggishly or not at all.

SYN flood attacks work by exploiting the handshake process of a TCP connection. To create denial-of-service, an attacker exploits the fact that after an initial SYN packet has been received, the server will respond back with one or more SYN/ACK packets and wait for the final step in the handshake.

- The attacker sends a high volume of SYN packets to the targeted server, often with spoofed IP addresses.

- The server then responds to each one of the connection requests and leaves an open port ready to receive the response.

- While the server waits for the final ACK packet, which never arrives, the attacker continues to send more SYN packets. The arrival of each new SYN packet causes the server to temporarily maintain a new open port connection for a certain length of time, and once all the available ports have been utilized the server is unable to function normally.

The targeted server is continuously leaving open connections and waiting for each connection to timeout before the ports become available again. The result is that this type of attack can be considered a “half-open attack”.
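The effect of the attack can be modelled with a toy queue of half-open connections. The class below is a hypothetical illustration, not how any particular operating system implements its backlog; capacity, method names, and addresses are all assumptions.

```python
from collections import OrderedDict
from typing import Tuple

Peer = Tuple[str, int]  # (source IP, source port)


class SynBacklog:
    """Toy model of a server's queue of half-open connections."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self.half_open: "OrderedDict[Peer, bool]" = OrderedDict()

    def on_syn(self, peer: Peer) -> bool:
        """Return True if the SYN was accepted (a SYN-ACK would be sent)."""
        if peer in self.half_open:
            return True                      # duplicate SYN for a known entry
        if len(self.half_open) >= self.capacity:
            return False                     # backlog full: the SYN is dropped
        self.half_open[peer] = True
        return True

    def on_ack(self, peer: Peer) -> bool:
        """The final ACK completes the handshake and frees the backlog slot."""
        return self.half_open.pop(peer, None) is not None

    def expire_oldest(self) -> None:
        """Model a timeout of the oldest half-open entry."""
        if self.half_open:
            self.half_open.popitem(last=False)


backlog = SynBacklog(capacity=3)
spoofed = [("10.0.0.%d" % i, 1000 + i) for i in range(3)]
accepted = [backlog.on_syn(peer) for peer in spoofed]   # the attacker fills the queue
legit_accepted = backlog.on_syn(("198.51.100.5", 40000))
```

Because the spoofed peers never send the final ACK, their entries stay in the queue until they time out, and the legitimate SYN is refused in the meantime.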

Mitigation

- Increasing Backlog queue: One response to high volumes of SYN packets is to increase the maximum number of possible half-open connections the operating system will allow. For that, the system must reserve additional memory resources to deal with all the new requests.

- Recycling the Oldest Half-Open TCP connection: Another mitigation strategy involves overwriting the oldest half-open connection once the backlog has been filled. This particular defense fails when the attack volume is increased, or if the backlog size is too small to be practical.

- SYN cookies: This strategy involves the creation of a cookie by the server. In order to avoid the risk of dropping connections when the backlog has been filled, the server responds to each connection request with a SYN-ACK packet but then drops the SYN request from the backlog, removing the request from memory and leaving the port open and ready to make a new connection. If the connection is a legitimate request, and a final ACK packet is sent from the client machine back to the server, the server will then reconstruct (with some limitations) the SYN backlog queue entry. While this mitigation effort does lose some information about the TCP connection, it is better than allowing denial-of-service to occur to legitimate users as a result of an attack.
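The idea behind SYN cookies can be sketched as follows: the server derives its initial sequence number from the connection's 4-tuple, a secret, and a coarse time counter, so it can later recognise a genuine final ACK without having stored anything. This is a deliberately simplified illustration using HMAC-SHA256; the function names, the counter scheme, and the `max_age` parameter are assumptions, and real implementations also pack extra information into the sequence number.

```python
import hashlib
import hmac
import socket
import struct


def syn_cookie(secret: bytes, src_ip: str, src_port: int,
               dst_ip: str, dst_port: int, counter: int) -> int:
    """Derive a 32-bit initial sequence number from the 4-tuple and a time counter."""
    message = (socket.inet_aton(src_ip) + socket.inet_aton(dst_ip)
               + struct.pack("!HHI", src_port, dst_port, counter))
    digest = hmac.new(secret, message, hashlib.sha256).digest()
    return int.from_bytes(digest[:4], "big")


def ack_is_valid(secret: bytes, src_ip: str, src_port: int, dst_ip: str,
                 dst_port: int, counter: int, ack_number: int,
                 max_age: int = 1) -> bool:
    """Check a final ACK against cookies from the current and recent counters."""
    for age in range(max_age + 1):
        if counter - age < 0:
            break
        cookie = syn_cookie(secret, src_ip, src_port, dst_ip, dst_port, counter - age)
        if ack_number == (cookie + 1) % 2 ** 32:  # the ACK acknowledges ISN + 1
            return True
    return False


SECRET = b"demo-secret"  # placeholder value for the example
isn = syn_cookie(SECRET, "198.51.100.7", 40000, "203.0.113.1", 443, counter=10)
client_ack = (isn + 1) % 2 ** 32
```

A final ACK carrying `client_ack` validates while the counter is 10 or 11, but not once the cookie is older than `max_age`, and not when it arrives from a different address or port.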
